Have you installed SQL Server 2016, enabled Database Mail and not been able to send a test email? Does the email queue show a list of queued mail items but no entries in the Database Mail log? This may help.

I installed SQL Server 2016 with CU1, used a domain user as the owner of the SQL Server accounts and gave that domain user local administrator privileges. I turned on database mail and created a profile and email account to send mail. When I attempted to send a test email, no test email ever arrived. Hmm, time to start troubleshooting.

First, I checked the Database Mail log. This was accomplished by going to the Object Explorer window, expanding ‘Management’ and right clicking on ‘Database Mail’ and choosing ‘View Database Mail Log’:

How to view SQL Server Database Mail log

No entries. Hmmm.

Next was to see what MSDB thought about the process. Querying MSDB using the built-in mail stored procedures and system tables yielded little results:

-- This one told me that Database Mail was started EXEC msdb.dbo.sysmail_help_status_sp; -- Here I learned that there were 5 items queued and the last times I tried sending mail EXEC msdb.dbo.sysmail_help_queue_sp @queue_type = 'mail'; -- This confirmed none of the email was sent SELECT * FROM msdb.dbo.sysmail_sentitems; SELECT * FROM msdb.dbo.sysmail_unsentitems; -- Is Service Broker enabled? It has to be to send mail SELECT is_broker_enabled FROM sys.databases WHERE name = 'msdb'; -- I tried stopping and restarting the Database Mail exe EXEC msdb.dbo.sysmail_stop_sp; EXEC msdb.dbo.sysmail_start_sp; |

The domain account responsible for running the SQL Server service and agent was a local administrator. I made it an instance administrator and restarted the instance. Still no success. And since I (and the service accounts) were both in the ‘sysadmin’ server role, permissions and ownership in the MSDB database role ‘DatabaseMailUserRole’ were not the issue.

My last attempt was to check the Database Mail executable directly. A Windows search for ‘databasemail’ showed it to be in “C:\Program Files\Microsoft SQL Server\MSSQL13.MSSQLSERVER\MSSQL\Binn”. Double clicking (or right click and ‘Run As Administator’) the executable showed me that .Net 3.5 was not installed:

.Net Framework 3.5 is not installed

Aha!

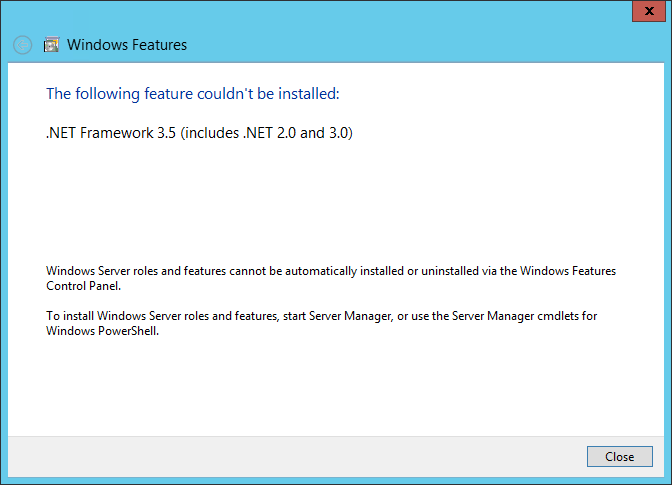

This was a Windows Server 2012 R2 box, so I had to add .Net 3.5 framework. Which meant I had to dig up the Windows install media (there may be ways to do it without but I didn’t dig that deep in Bingle).

Opening Control Panel and choosing ‘Turn Windows features on or off’ brought up the wizard.

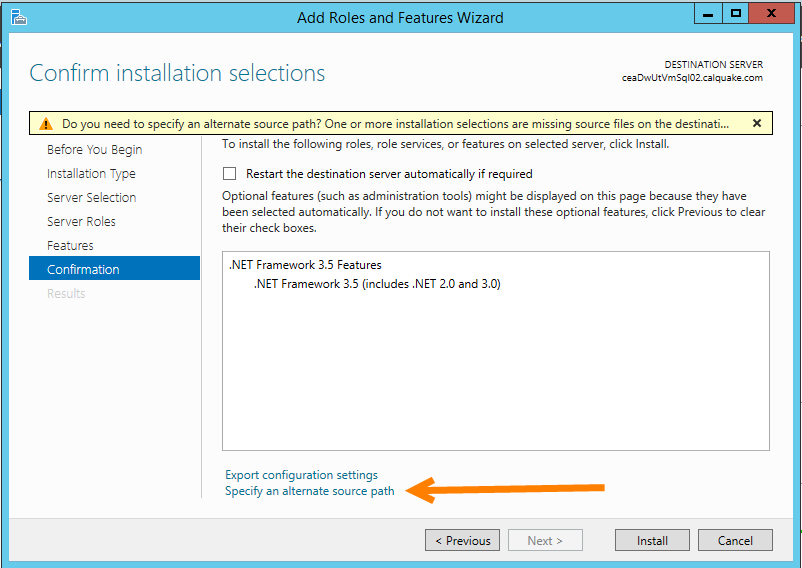

Choose ‘Role-based or feature-based installation’ > ‘Next’. Select the server and click ‘Next’. Leave all boxes blank on the ‘Select server roles’ screen and choose ‘Next’. On the ‘Select features’ screen, check the box for ‘.Net Framework 3.5 features’ and click ‘Next’.

The ‘Confirm installation selections’ screen will have a warning at the top beginning with ‘Do you need to specify an alternate source path?…’. Below the big white box in the middle of the windows, click the link ‘Specify an alternate source path’; this brings up a new window. Put the path to the Windows media. For me, I’d mounted the disc to E:\, my source path was ‘E:\sources\sxs’.

Supply alternate source path

Click ‘Install’ (the above came from https://technet.microsoft.com/en-us/library/dn482071.aspx, which claims not to be maintained).

Once .Net 3.5 was installed, I went back and double clicked the ‘databasemail.exe’ from above. All my mail that was queued was delivered. And all test emails were now sent successfully.

These help with troubleshooting:

https://technet.microsoft.com/en-us/library/ms187540(v=sql.105).aspx

https://technet.microsoft.com/en-us/library/ms190630(v=sql.105).aspx

This one has a comment at the end that helped point me in the right direction and says that CU2 may resolve the above issue:

http://dbatasks.blogspot.com/2012/11/troubleshooting-database-mail-part-ii.html